Characterizing and Improving the Reliability of Brain Connectivity extracted with Magnetic Resonance Imaging

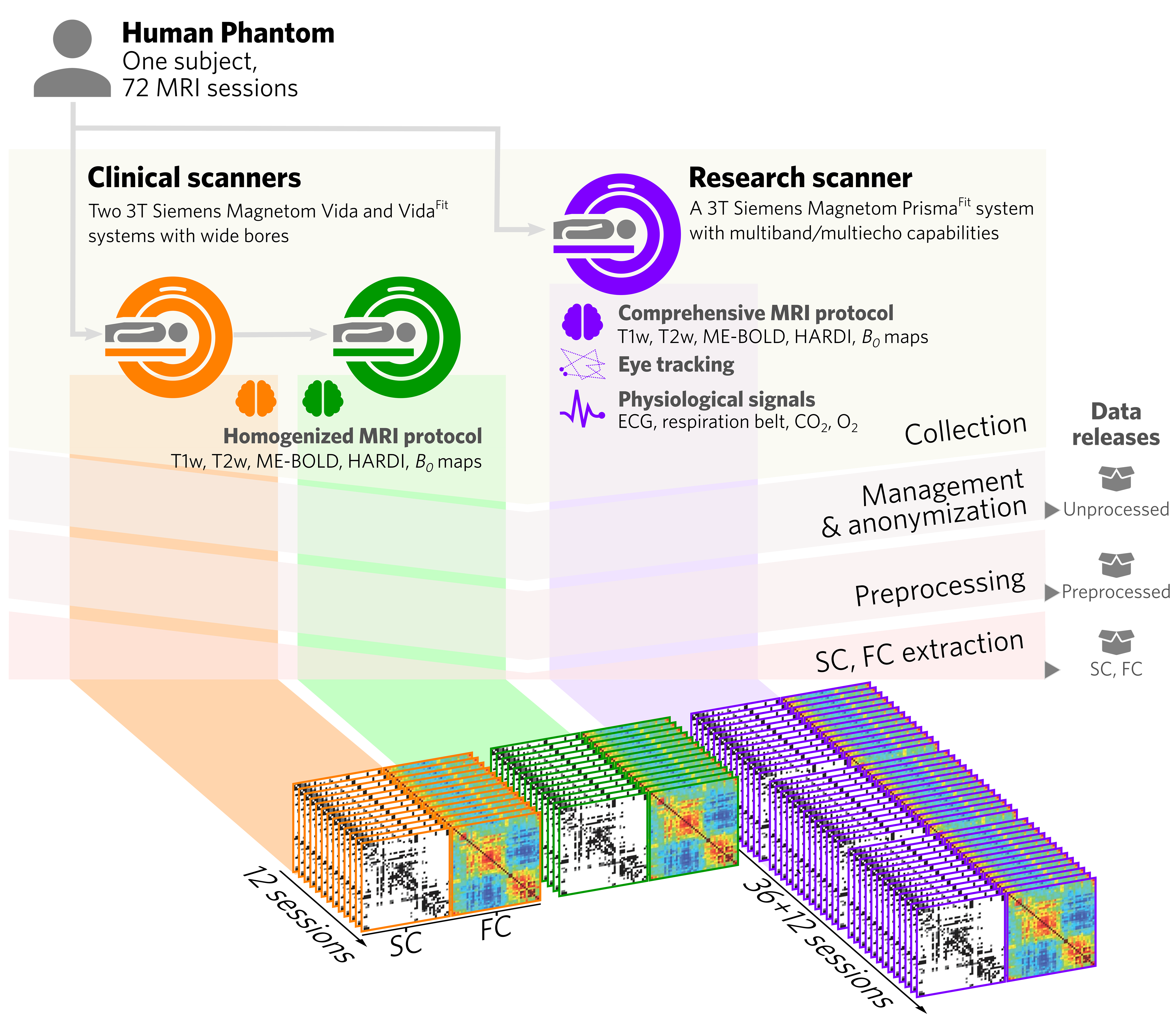

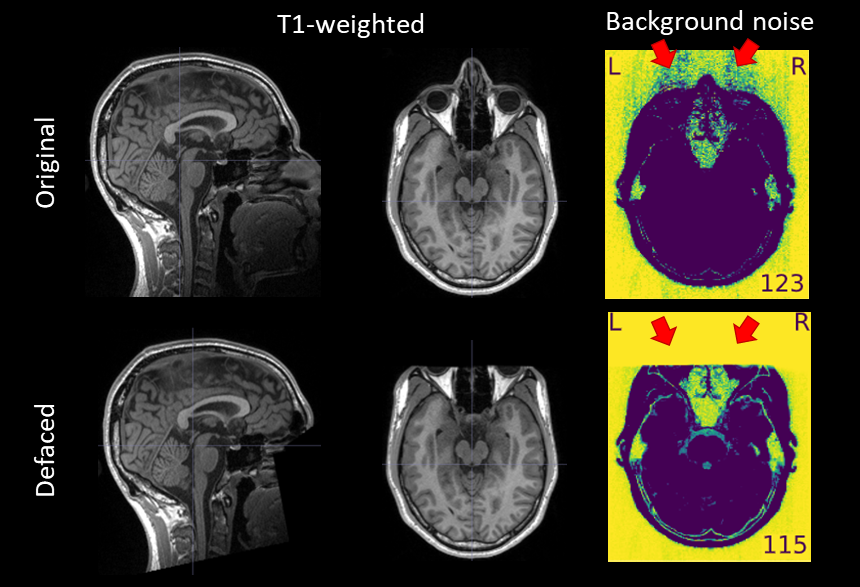

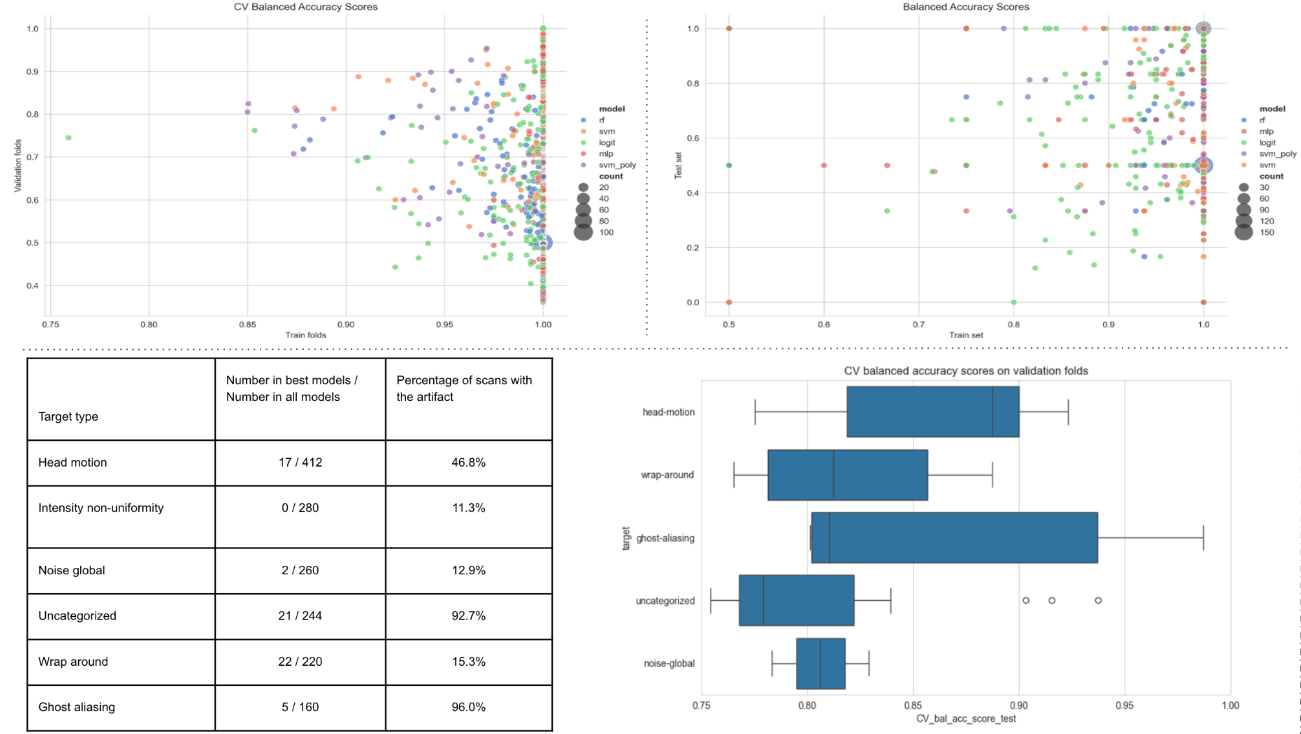

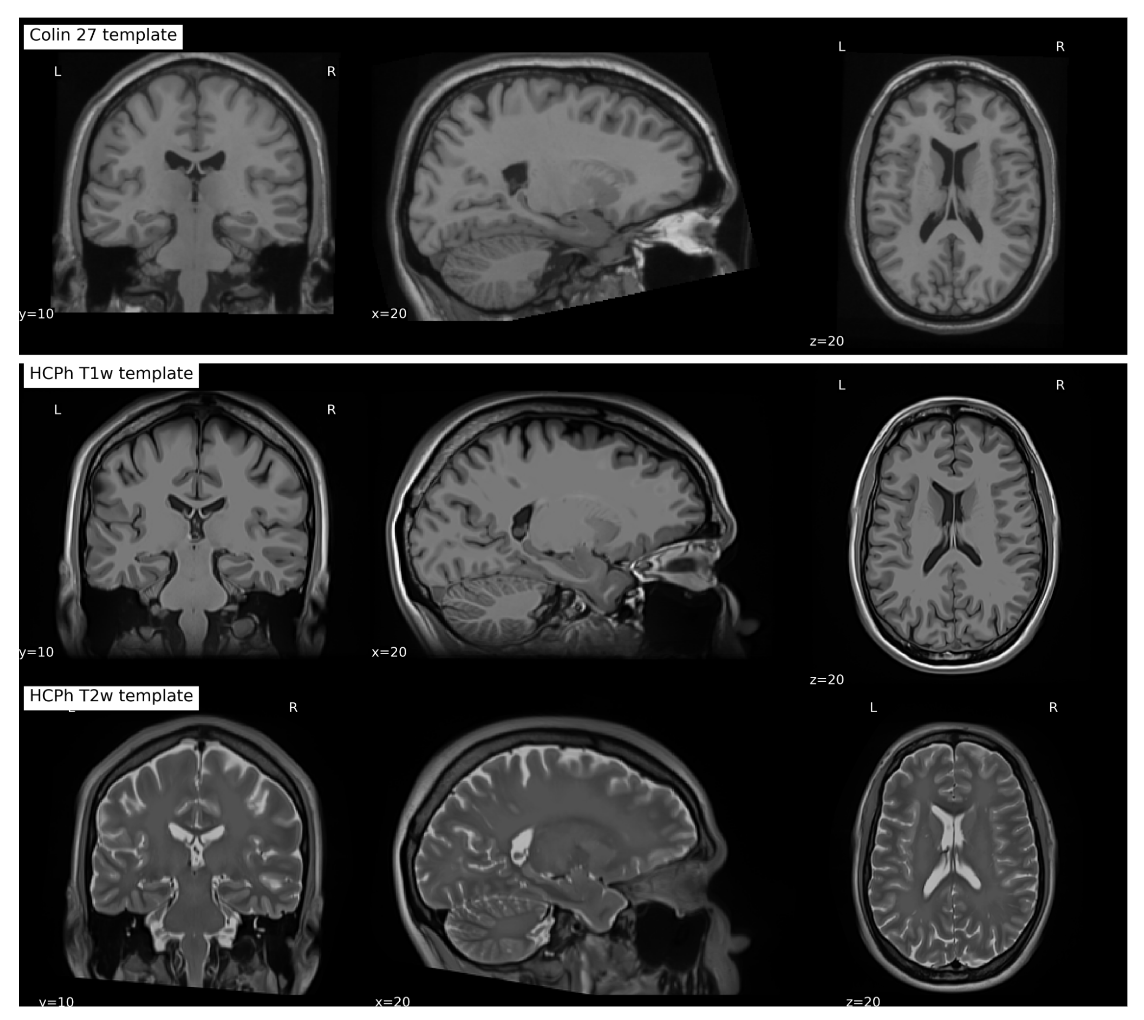

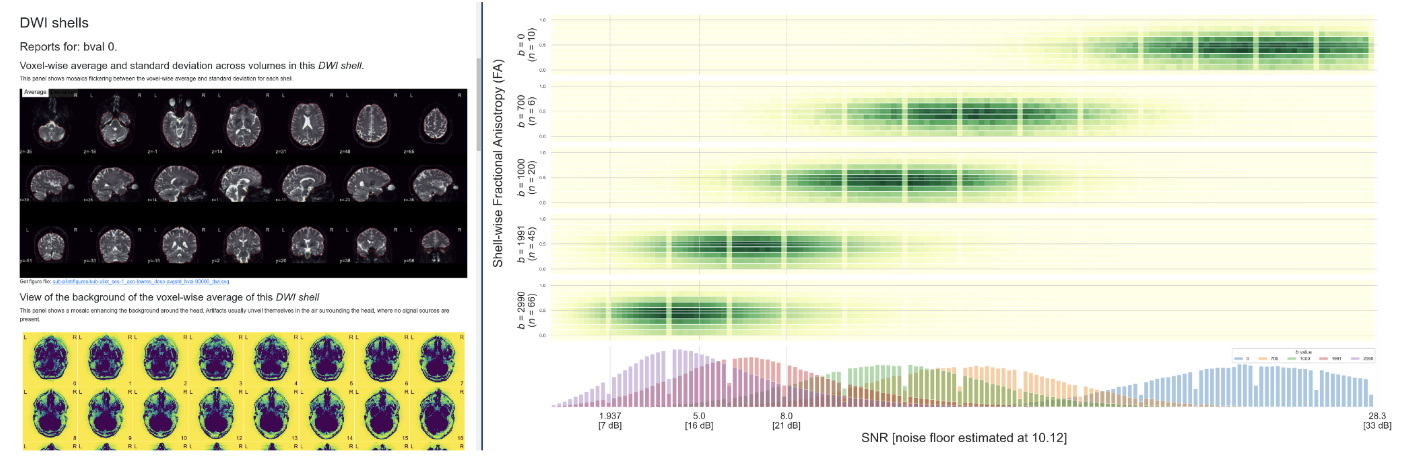

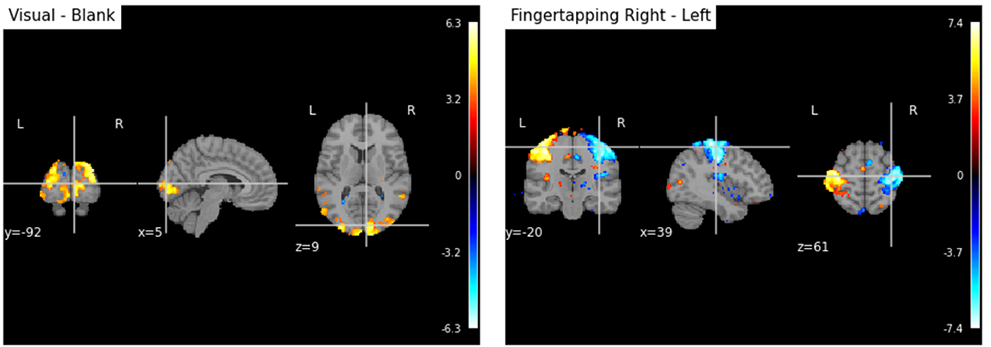

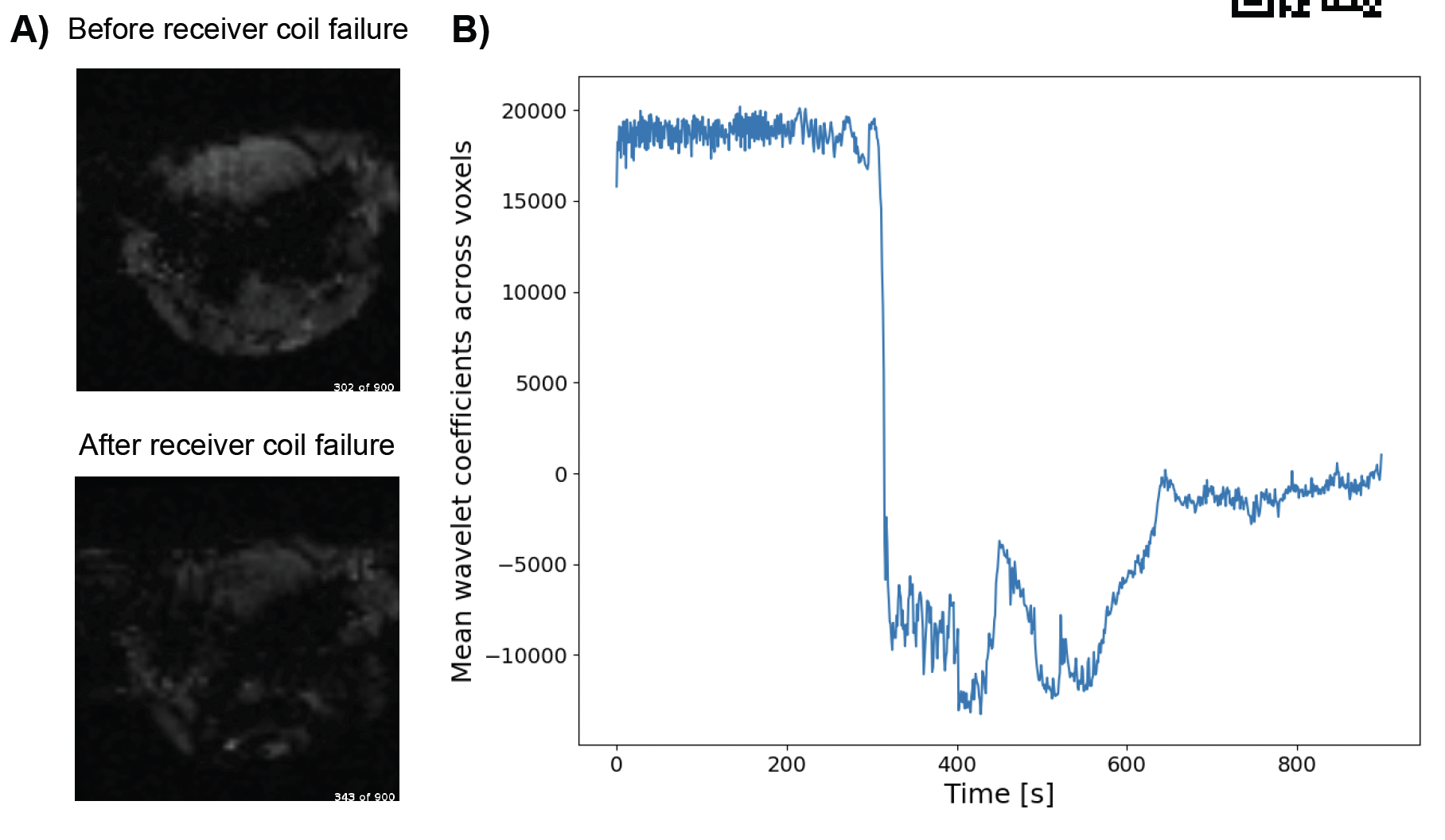

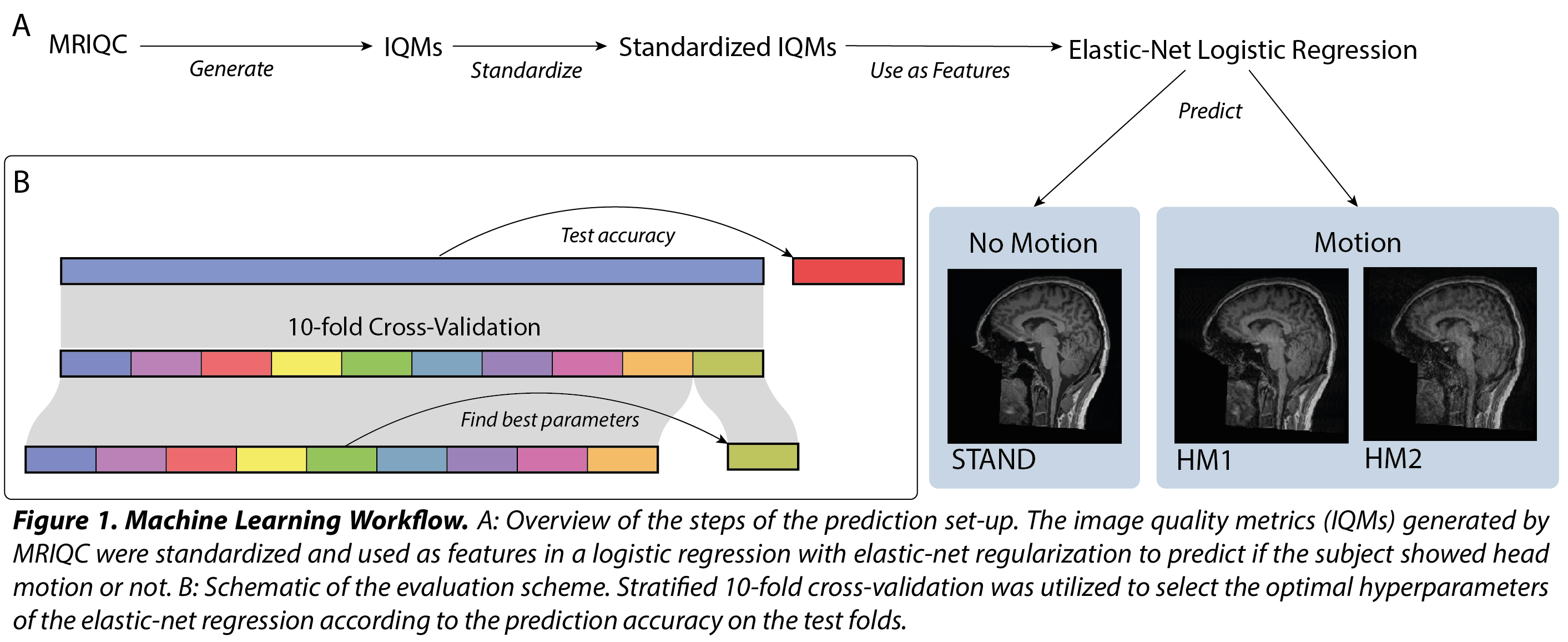

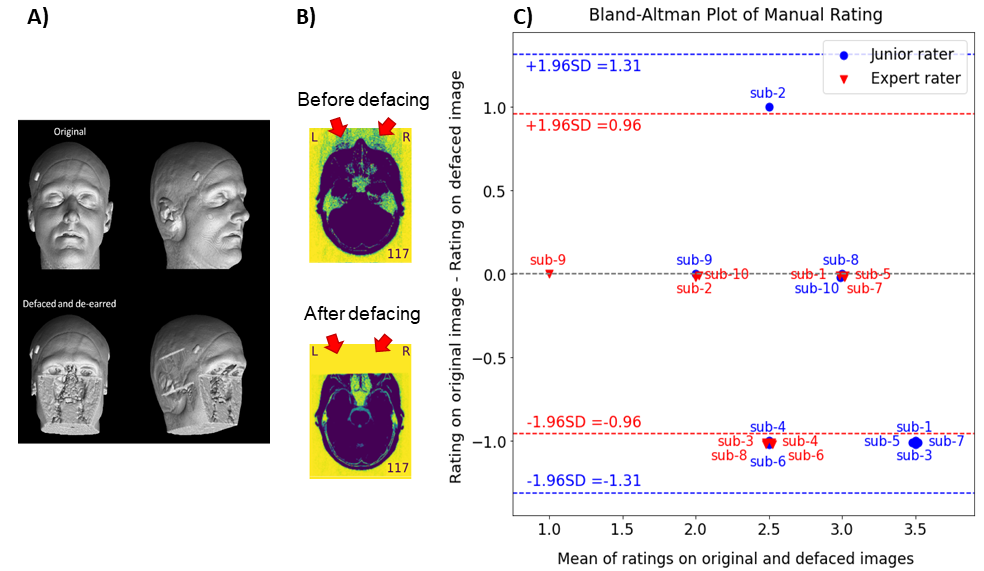

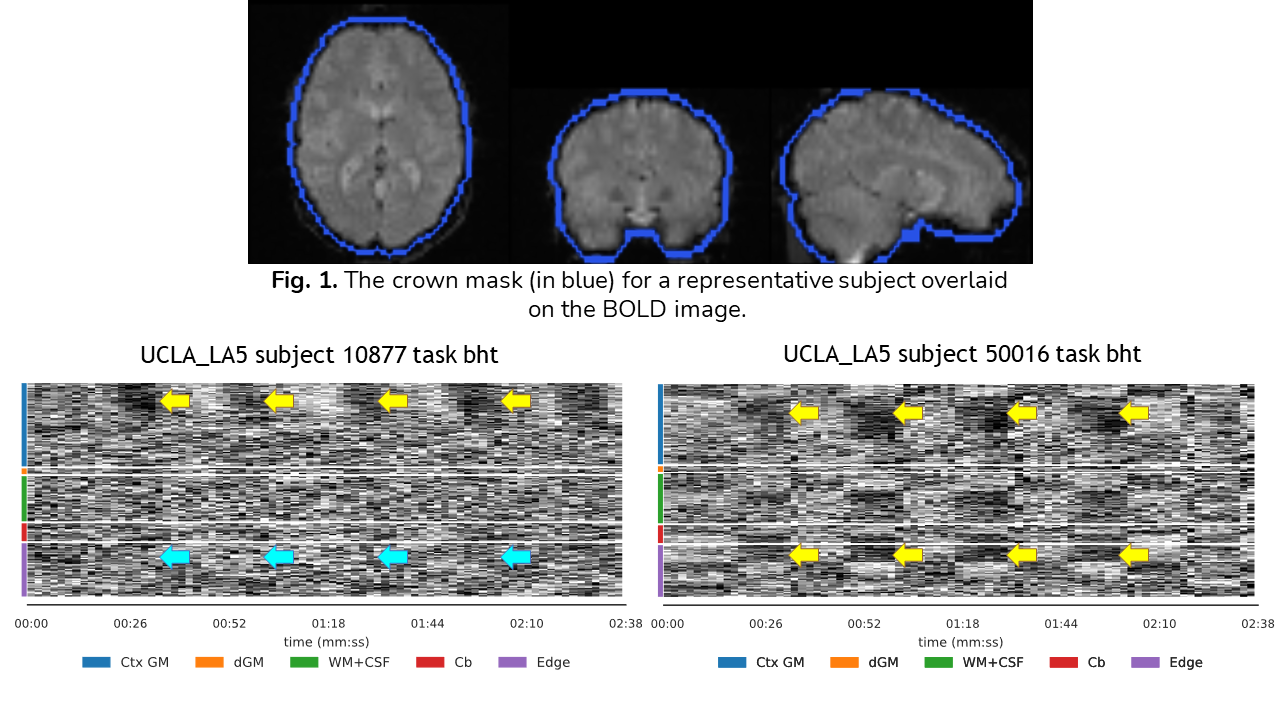

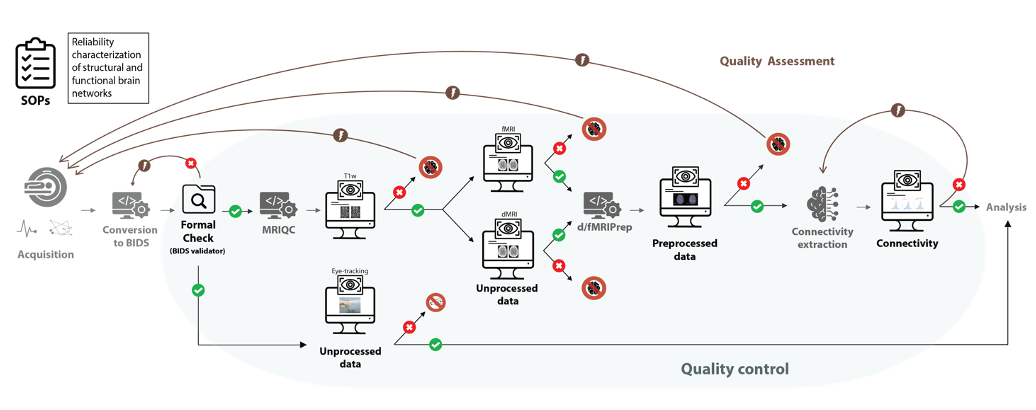

Connectivity analysis in neuroimaging has grown immensely over the last two decades as understanding structural and functional networks has provided great insights into brain function, structure, and disease. Although MRI is a versatile imaging modality to explore these networks, the indirect nature of MRI measurements and its sensitivity to numerous noise sources pose substantial challenges to obtaining reliable measurements. Additionally, to extract structural and functional connectivity from the noise, numerous processing steps are required, which has led to the development of a wealth of neuroimaging workflows, each with its own merits but also methodological nuances. This extensive methodological flexibility further undermines the reliability of brain connectivity results. Collectively, these issues restrict the translation of network-based biomarkers into clinical applications.

In this thesis, we aimed to address these challenges by proposing a framework for characterizing and improving the reliability of brain connectivity estimates. We approach the broad and multifaceted problem of reliability characterization by decomposing it into three levels: (1) participant-level variability, (2) scanner-level variability, and (3) data processing-level variability. Moreover, we present four approaches to increasing reliability: redundancy, standardization, quality assurance and quality control (QA/QC), and registered reports.

The first project presents how we applied the principles of redundancy and standardization to establish a comprehensive and robust QA/QC protocol. In addition to presenting the protocol, our paper highlighted the importance of setting up several checkpoints throughout the workflow and adapting QA/QC procedures to the specifics of the studies. That protocol, combined with our dataset quality annotations, serves as a stepping stone towards developing automatic algorithms to complement visual QA/QC, alleviating researchers’ workload and correcting human-related inconsistencies. We encourage other researchers to use our protocol as a solid foundation to adapt to their own needs.

In our second study, we leveraged our standardized QA/QC protocol to investigate the impact of defacing, a necessary step to protect participant privacy when sharing data publicly, on perceived image quality. We found that defacing significantly altered human perception of quality, suggesting that performing QA/QC on defaced data is suboptimal as it risks failing to remove subpar images that could subsequently bias the results. We therefore advise future studies to perform QA/QC before defacing or any other step that could alter the data. Moreover, QA/QC materials extracted from unaltered data should be included when publicly sharing datasets. Machine perception, on the other hand, was found to be unaffected by defacing because MRIQC did not take into account areas typically altered by defacing. If the field is to follow the recommendations above, future development of QA/QC tools such as MRIQC should correct this weakness.

Building on these principles of redundancy, standardization, and the importance of QA/QC, our third study introduced the Human Connectome Phantom (HCPh) dataset, a novel dataset designed to address key challenges in connectivity reliability. The dataset features repeated scans of a single individual across three scanners using multi-echo functional MRI (fMRI) and multi-shell diffusion MRI. This design provides the unique opportunity to characterize the interscanner reliability of both functional and structural connectivity within a unified framework. The sessions on one scanner also incorporated extensive physiological monitoring and acquired the resting-state fMRI using a naturalistic paradigm to enable a detailed examination of intrascanner variability. We encourage the neuroimaging community to leverage this extremely valuable dataset to evaluate the reliability of a vast range of modeling methods whose reliability could not be characterized before. This dataset also offers unique opportunities to develop new methodologies to remove fMRI signal fluctuations from non-neural sources more efficiently.

Overall, this thesis paves the way for improving and better characterizing the reliability of functional and structural connectivity, thereby deepening our understanding of their feasibility as biomarkers.

Can Replaying a Movie Clip Reduce Variability in Single-Subject Resting-State fMRI? In 31st Annual Meeting of the Organization for Human Brain Mapping (OHBM) in Brisbane, Jun 2025

Physiopy: a Python suite for handling physiological data recorded in neuroimaging settings. In 30th Annual Meeting of the Organization for Human Brain Mapping (OHBM) in Seoul, Jun 2024

QA/QC of Anatomical, Functional, and Diffusion MRI Data of the Human Connectome Phantom (HCPh) Dataset with MRIQC and fMRIPrep. In Annual Meeting of the International Society for Magnetic Resonance in Medicine (ISMRM) in Singapore, May 2024